From SERPs to PageRank: A Guide to Search Engine Optimisation

Search Engine Optimisation, or SEO, is the business of making your website as visible as possible in a search engine's organic results. Organic results are the listings that appear naturally when you search, and they appear because the search engine judges a site to be an authority for that query. The higher a page ranks, the better the search engine thinks it answers the user's query. The other results you see are paid ads, labelled 'sponsored' or 'ad'. They still have a quality-related element to how they rank, but there's also a financial element that isn't there for organic results. So as a business, you build a great site, publish a blog every day or week, and the search engines will love it, propelling your business sky-high in the organic rankings? In theory, yes, but it takes a bit more thought than that.

So, What Are Search Engines?

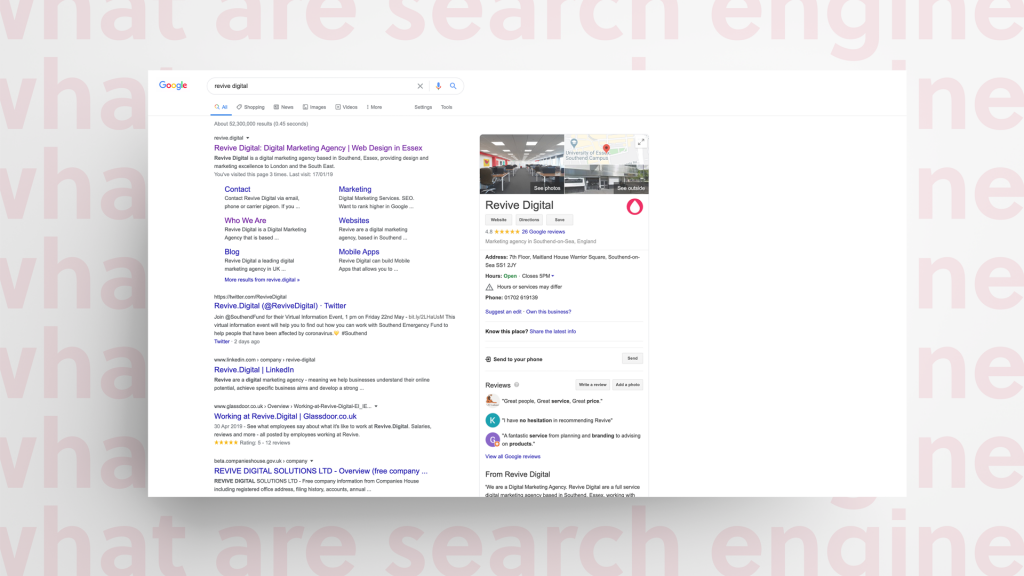

The world's most widely used search engine has become such a part of daily life that it's now a verb. If you want to find out 'such and such', you simply 'Google' it. There are other search engines, but Google, Bing and Yahoo are the biggest three (Google has around 92% market share of all searches, while second-placed Bing has just 2.4%, so you'll always want to optimise for Google first). Think of a search engine as a digital filing cabinet: an online repository that stores billions of web pages in its index. When someone searches for something, the search engine looks through that index to find the most relevant results for the query. The pages of results it displays are called SERPs: Search Engine Results Pages.

What the Algorithm???

Sites that contain the most relevant content for a user's search intent get ranked higher than those that don't. And as your site drops away from the top three organic results, it becomes less likely that a user will click. Fewer clicks means fewer visitors to your website, and fewer visitors means fewer sales. But how does a search engine decide which of the pages in its index to show, and in what order? It uses computer programs called algorithms. These algorithms weigh up a variety of factors to decide how relevant a page is to a user's search query, and they are famously secretive. No-one outside Google knows for sure which ranking factors its algorithms use (although it's generally agreed there are more than 200). Google does drop clues every so often, but almost everything SEO marketers know about Google's algorithm has been gleaned through trial and error. These factors include location, intent, how fresh the content is and how authoritative it is, and each is given its own weight. More technical issues, such as ease of navigation, site speed and ease of use on different devices, are also taken into account... as well as at least 193 other factors.
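To make the idea of weighted factors concrete, here is a deliberately simplified Python sketch. It is not how Google works: the factor names, the 0-to-1 scores and the weights below are all invented for illustration, because the real factors and weights aren't public. Each page gets a weighted sum of its signals, and pages are ordered by that score.

```python
from dataclasses import dataclass

# Invented weights for illustration only; the real factors and weights aren't public
WEIGHTS = {"relevance": 0.4, "authority": 0.3, "freshness": 0.1, "speed": 0.1, "mobile": 0.1}

@dataclass
class Page:
    url: str
    signals: dict[str, float]  # hypothetical signal scores from 0.0 (poor) to 1.0 (excellent)

def score(page: Page, weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of a page's signals; a missing signal counts as zero."""
    return sum(weight * page.signals.get(name, 0.0) for name, weight in weights.items())

def rank(pages: list[Page], weights: dict[str, float] = WEIGHTS) -> list[str]:
    """Return page URLs ordered from highest to lowest score."""
    ordered = sorted(pages, key=lambda page: score(page, weights), reverse=True)
    return [page.url for page in ordered]

# Two hypothetical pages competing for the same query
pages = [
    Page("/fast-but-thin", {"relevance": 0.3, "authority": 0.4, "speed": 1.0, "mobile": 1.0}),
    Page("/slow-but-relevant", {"relevance": 0.9, "authority": 0.6, "speed": 0.2, "mobile": 1.0}),
]
print(rank(pages))
```

Even in this toy, a fast page can't make up for thin content, because relevance and authority carry most of the (invented) weight.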

Understanding Search Engine Optimisation

Firstly, every search engine has its own ranking algorithm. But they all have one thing in common: they want to deliver the best possible search results so that users keep coming back. To do this they send out 'spiders' to crawl the web pages of each site, taking a snapshot that the algorithm uses to decide how pages should rank for a particular search term. So this is where we need to start talking about links...

SEO Backlinks and Internal Links

When the spiders crawl a website they follow links, which is part of the reason links are considered such an important ranking factor. If a spider lands on a site that links to your site, it will do one very important thing... it will follow that link to your site and add your page to the index. That means when someone Googles a query relevant to that page, Google can rank it as a source of information and show it in the search results.
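To show what 'following a link' means in practice, this Python sketch uses the standard-library HTML parser to pull the links out of one page, the way a spider would before queueing them up. The page and domains are hypothetical, and the sketch also sets aside links marked rel="nofollow", which ask crawlers not to follow them. A real crawler handles far more cases than this.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

class LinkExtractor(HTMLParser):
    """Collect the links a crawler could follow from one HTML page."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.follow: list[str] = []    # links a crawler may follow
        self.nofollow: list[str] = []  # links marked rel="nofollow"

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if not href:
            return
        url = urljoin(self.base_url, href)  # resolve relative links like "/cards"
        rel = (attrs.get("rel") or "").lower().split()
        (self.nofollow if "nofollow" in rel else self.follow).append(url)

# A hypothetical blog post on a baseball card site
html = """
<p>Great bats at <a href="https://bats.example/">Bat Shop</a>.</p>
<p>See our <a href="/cards">card range</a> and
<a href="https://ads.example/offer" rel="sponsored nofollow">this offer</a>.</p>
"""
parser = LinkExtractor("https://cards.example/blog/post")
parser.feed(html)
print(parser.follow)    # links the spider would queue up
print(parser.nofollow)  # links it is asked not to follow
```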

Which leads to another point:

Google ranks pages, not entire sites...

It's worth noting that even this view is perhaps considered outdated. As expert optimiser Neil Patel puts it, the focus is now on how people engage with a particular product or service and the ROI rather than being focussed on page rankings and keywords (and we're using Google here as the example because of that huge 92% share of the search market).

Spiders and Web Crawling in Search Engine Optimisation

Now suppose your crawled page has no internal links (links pointing to other pages on your site). What will the spiders do? They'll stop at that single page, and the rest of your great content goes to waste. But if you've got good internal linking (and that means relevant links too), the spiders will follow those links and add those pages to the index.

Some things can block the spiders. For example, a 'nofollow' instruction (a rel="nofollow" attribute on a link) tells spiders not to follow that link, while robots.txt is a file on your site that tells crawlers which pages they may and may not crawl. And a page that no other page links to may never be found by the spiders at all.

Optimising your pages with links is one part of the SEO process. Imagine you have a site with hundreds of product pages, useful content and blogs. You want it to be read, but without links those pages could be left in the dark. Pages with good search engine optimisation value can even pass some of it on to other pages on your site through links, something that's sometimes called 'SEO juice'.

Of course, links are only one part of the search engine optimisation process, and crawling is just one way that Google finds web pages (submitting a sitemap is another). But it is still the primary way, Google still uses PageRank as a measure of authority, and authority gives pages a good leg up in the search engine rankings.
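The crawling behaviour above can be sketched as a breadth-first crawl over a small, made-up site, using Python's standard-library robots.txt parser (urllib.robotparser). The page map, the robots.txt rules and the orphan page are all hypothetical. The spider starts at the homepage, follows internal links, skips anything robots.txt disallows, and never finds the page that nothing links to.

```python
from collections import deque
from urllib.robotparser import RobotFileParser

# Hypothetical site: each page maps to the internal links found on it
SITE = {
    "/": ["/products", "/blog"],
    "/products": ["/products/bat", "/"],
    "/products/bat": ["/products"],
    "/blog": ["/blog/choosing-a-bat", "/admin"],
    "/blog/choosing-a-bat": ["/products/bat"],
    "/admin": [],
    "/products/glove": [],  # orphan page: nothing links to it
}

ROBOTS_TXT = """
User-agent: *
Disallow: /admin
"""

def crawl(start: str, site: dict[str, list[str]], robots_txt: str) -> list[str]:
    """Breadth-first crawl that follows internal links and obeys robots.txt."""
    robots = RobotFileParser()
    robots.parse(robots_txt.splitlines())
    seen, order = {start}, []
    queue = deque([start])
    while queue:
        page = queue.popleft()
        if not robots.can_fetch("*", page):
            continue  # disallowed by robots.txt: skip it
        order.append(page)
        for link in site.get(page, []):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return order

crawled = crawl("/", SITE, ROBOTS_TXT)
print(crawled)
```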

What's PageRank?

We touched on backlinks earlier. But how does Google's algorithm decide which page is a good fit for a user's search intent and which is less relevant? A big part of the answer is PageRank. If you run a site, you may already have received prospecting emails in which another site owner invites you to connect for mutual benefit... “Hi there. I noticed you sell baseball bats and I sell baseball cards. Would you consider linking to my site? I have loads of great content that your audience would love, etc., etc...” The reason people do this is to get that all-important backlink. Backlinks are a sign of authority to Google's algorithm. The logic is that if your site and content are good, other sites will want to link to them, so your site must be worth putting front and centre in the search results. And Google would give more weight to a backlink that comes from a page with a higher PageRank.

Or so it seemed...

A few years ago, Google's toolbar displayed a PageRank score from zero to ten for each page (ten being the best). So, in theory, links from a page with a high PageRank would improve your rankings because they came from an authoritative source. But Google stopped updating the toolbar scores in 2013 and removed them completely in 2016. SEOs became divided between those who thought PageRank no longer mattered and those who thought it was still relevant, even if no longer visible.

It's easier if you think about PageRank in two separate ways: as a toolbar score and as an algorithm. The toolbar is dead, but the PageRank algorithm isn't. As Ahrefs notes about PageRank: "It was so effective that it became the foundation of the search engine we now know as Google, and it still is."

And if you're interested, here's the algorithm from the original patent in 1997:

"We assume page A has pages T1…Tn which point to it (i.e., are citations). The parameter d is a damping factor which can be set between 0 and 1. We usually set d to 0.85. There are more details about d in the next section. Also C(A) is defined as the number of links going out of page A. The PageRank of a page A is given as follows:

$$PR(A) = (1-d) + d\left(\frac{PR(T_1)}{C(T_1)} + \cdots + \frac{PR(T_n)}{C(T_n)}\right)$$

Note that the PageRanks form a probability distribution over web pages, so the sum of all web pages’ PageRanks will be one."

… exactly!
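To see the formula in action, here is a minimal Python sketch that applies it over and over to a tiny, made-up three-page web until the scores stop changing. It assumes every page has at least one outgoing link, and uses d = 0.85 as in the quote. One thing the sketch makes visible: with the (1 - d) term exactly as written, the scores add up to the number of pages rather than one; dividing (1 - d) by the number of pages gives the version that sums to one.

```python
def pagerank(links: dict[str, list[str]], d: float = 0.85,
             tol: float = 1e-12, max_iter: int = 1000) -> dict[str, float]:
    """Repeatedly apply PR(A) = (1 - d) + d * sum(PR(T) / C(T)) until scores settle.

    `links` maps each page to the pages it links to, so C(T) = len(links[T]).
    Assumes every page has at least one outgoing link (no dead ends).
    """
    pages = list(links)
    # Work out which pages T1...Tn point at each page A
    incoming: dict[str, list[str]] = {page: [] for page in pages}
    for source, targets in links.items():
        for target in targets:
            incoming[target].append(source)

    pr = {page: 1.0 for page in pages}  # start every page on an equal score
    for _ in range(max_iter):
        new_pr = {
            a: (1 - d) + d * sum(pr[t] / len(links[t]) for t in incoming[a])
            for a in pages
        }
        change = max(abs(new_pr[page] - pr[page]) for page in pages)
        pr = new_pr
        if change < tol:
            break
    return pr

# A made-up three-page web: A links to B and C, B links to C, C links back to A
web = {"A": ["B", "C"], "B": ["C"], "C": ["A"]}
ranks = pagerank(web)
for page, score in sorted(ranks.items(), key=lambda item: item[1], reverse=True):
    print(page, round(score, 3))
print("total:", round(sum(ranks.values()), 6))
```

Page C comes out on top because two pages link to it, while B, which only gets half of A's vote, comes last. The three scores add up to 3, not 1.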

'Ok Google.' What Else?

Links and PageRank are just one element of the search engine optimisation process. Good search engine optimisation covers everything from making sure page titles and meta descriptions inform the reader and earn the click (and are the right length) to directing internal links at your best content. You'll also need to look at the following areas. Some are on-page content tweaks; others require technical knowledge.

- Authoritative content that answers the user's search query, earns backlinks and gets shared

- Keyword research and optimisation, so you rank for relevant queries

- A fast load speed and a good user experience

- Not only spot-on page titles and meta descriptions, but a logical URL structure too

- Proper schema markup (structured data) so your pages can earn rich snippets and stand out in the results pages
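Title and meta description length is easy to check automatically. This Python sketch pulls both out of a page with the standard-library HTML parser and flags anything missing or over length. The 60 and 160 character limits are common rules of thumb, not official Google numbers (Google generally truncates by display width rather than a fixed character count), and the sample page is invented.

```python
from html.parser import HTMLParser

# Rule-of-thumb limits (assumptions, not official Google numbers)
TITLE_MAX = 60
DESCRIPTION_MAX = 160

class HeadReader(HTMLParser):
    """Pull the <title> text and the meta description out of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data

def check_head(html: str) -> list[str]:
    """Return human-readable warnings about the title and meta description."""
    reader = HeadReader()
    reader.feed(html)
    title = reader.title.strip()
    warnings = []
    if not title:
        warnings.append("missing <title>")
    elif len(title) > TITLE_MAX:
        warnings.append(f"title is {len(title)} chars (over {TITLE_MAX})")
    if not reader.description:
        warnings.append("missing meta description")
    elif len(reader.description) > DESCRIPTION_MAX:
        warnings.append(
            f"meta description is {len(reader.description)} chars (over {DESCRIPTION_MAX})"
        )
    return warnings

page = """<html><head>
<title>Baseball Bats | Example Sports</title>
</head><body><h1>Baseball Bats</h1></body></html>"""
print(check_head(page))
```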

Optimising For Search Intent

Does the page that appears on the results page match the user's search intent? It's an important question. They could be looking to buy, or maybe they're just doing some research. If you've optimised for a particular keyword but the searcher's intent is different, they'll leave your page before you know it, and that's not good for your site in search engine optimisation terms. To optimise for intent, look at what the search engine results pages are already delivering for your keyword.

Let's look at the basic types of intent:

Informational

Here, the user is looking for info – perhaps a quick answer or something more in depth. Remember too that people don't necessarily search for information as a question. For example: “What is SEO?” “Digital Marketing”

Navigational

Here, the user is looking for a specific site or page. For example: “Revive Digital” “BBC Sport”

Transactional

As the name suggests, with transactional searches users are looking to buy and want a place that sells the product they're after. For example: “cheap garden furniture” “buy Ray-Ban aviator”

Commercial Investigation

With these searches the user is ready to buy or use a service but hasn't yet decided which option is best for them. For example: “best Indian restaurant in Essex” “Shark vs Dyson cordless hoover”

Whatever the intent, the results pages give you clues. Where featured snippets are shown, it's a good indicator that searchers are looking for information. Also look at the type of results delivered by the highest-ranked sites. Are they blogs or product pages? Are they how-to guides? Whatever theme the top-ranking sites share, you can use it to make your own content relevant. If someone wants to buy but you've delivered a very nice how-to infographic, you could end up with a frustrated user. And remember, all search engines want to deliver the best results for the searcher's query.
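A first pass at sorting keywords by intent can be sketched with simple keyword rules. This Python example runs the example queries from this section through it; the modifier word lists and the brand list are assumptions for illustration, and real intent is better judged by checking what the results pages actually show.

```python
import re

# Illustrative modifier words (assumptions, not an official list)
COMMERCIAL_WORDS = {"best", "vs", "versus", "review", "reviews", "top", "compare"}
TRANSACTIONAL_WORDS = {"buy", "cheap", "price", "deal", "discount", "order"}

def classify_intent(query: str, brands: set[str]) -> str:
    """Guess a query's intent with simple keyword rules (a rough heuristic)."""
    words = re.findall(r"[a-z0-9'-]+", query.lower())
    if " ".join(words) in brands:
        return "navigational"  # the query is just a known site or brand name
    if COMMERCIAL_WORDS & set(words):
        return "commercial investigation"  # comparing options before buying
    if TRANSACTIONAL_WORDS & set(words):
        return "transactional"  # ready to buy now
    return "informational"  # questions and broad topics land here

# Hypothetical brand list; in practice you'd use names you know people search for
BRANDS = {"revive digital", "bbc sport"}

for q in ["What is SEO?", "Digital Marketing", "Revive Digital", "BBC Sport",
          "cheap garden furniture", "buy Ray-Ban aviator",
          "best Indian restaurant in Essex", "Shark vs Dyson cordless hoover"]:
    print(f"{q!r}: {classify_intent(q, BRANDS)}")
```

Commercial words are checked before transactional ones, so a comparison query like "best cheap hoover" is treated as research rather than a ready-to-buy search.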

Mobile Friendly?

These days, most people use a mobile or tablet. As the number of people searching on these devices grew, Google gave a ranking boost to mobile-friendly sites in 2016. Then in 2018 Google began switching to mobile-first indexing, meaning it uses the mobile version of your pages to decide how they rank. What if you didn't have a mobile-friendly or responsive site yet? Not everyone did (but they got one fast when their traffic started to drop).

Faster Loading Pages

Page speed is a ranking factor for Google, and a slow site will ruin your conversion rate. To make pages load fast, you'll need to strip out unnecessary elements and get images down to web-friendly file sizes. Do browsers run into blocking scripts while they build the Document Object Model (DOM) of your pages? OK, improving site speed does get a bit technical, but here are some basic ways to strip out slowness:

Compress Files

Enable compression (such as gzip) for CSS, HTML and JavaScript files larger than 150 bytes. Don't use it on images; you should optimise images in other ways.
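To get a feel for what compression saves, this Python sketch gzips a made-up, repetitive stylesheet with the standard-library gzip module and compares the sizes. In practice your web server compresses text files on the fly for browsers that say they accept gzip, so this only illustrates the effect; the stylesheet is invented.

```python
import gzip

# A made-up, repetitive stylesheet; real CSS compresses well for the same reason
css = "".join(
    f".button-{i} {{ color: #333333; padding: 10px 20px; border-radius: 4px; }}\n"
    for i in range(50)
).encode("utf-8")

compressed = gzip.compress(css)
print(f"original: {len(css)} bytes, gzipped: {len(compressed)} bytes")

# Compression is lossless: decompressing gives back exactly the same file
assert gzip.decompress(compressed) == css
```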

Optimise Your Images

Make sure your images aren't bigger than they need to be. Graphics are better in PNG format while you should choose JPEG for photographs. For buttons (Shop Now, Click Here) use a CSS image sprite to cut down on the number of server requests and save bandwidth.

Optimise CSS, JavaScript, and HTML

Minify your code: remove unnecessary characters such as extra spaces and line breaks, code comments and formatting, and strip out any unused code.
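Here is a toy Python CSS minifier that shows the idea: strip comments, collapse whitespace and drop the spaces around punctuation. The minify_css function is hypothetical and for illustration only. It would mangle some valid CSS (for example, spaces that matter inside strings, or in selectors like 'a :hover'), so use an established minifier or your build tool for a real site.

```python
import re

def minify_css(css: str) -> str:
    """Toy minifier: removes comments and whitespace that CSS doesn't need."""
    css = re.sub(r"/\*.*?\*/", "", css, flags=re.DOTALL)  # drop /* comments */
    css = re.sub(r"\s+", " ", css)                         # collapse runs of whitespace
    css = re.sub(r"\s*([{}:;,>])\s*", r"\1", css)          # no spaces around punctuation
    css = css.replace(";}", "}")                           # last semicolon in a rule is optional
    return css.strip()

source = """
/* Button styles */
.button ,
.link {
    color : #333 ;
    padding: 10px 20px;
}
"""
print(minify_css(source))
```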

Cache, Cache and Cache Again

Use caching so that your site serves stored copies of pages, or parts of pages, where possible instead of rebuilding them for every visit.
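As a rough sketch of server-side caching, this hypothetical Python PageCache class keeps a rendered page for a set number of seconds and serves the stored copy to repeat visitors instead of rebuilding it. Real sites usually get this from a caching plugin, their framework, a CDN or browser caching headers; the class, the 60-second lifetime and the fake clock are assumptions made for the example.

```python
import time
from typing import Callable

class PageCache:
    """Serve stored copies of rendered pages for `ttl` seconds before rebuilding."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl
        self.clock = clock  # injectable so the expiry logic is easy to test
        self._store: dict[str, tuple[float, str]] = {}

    def get(self, path: str, render: Callable[[str], str]) -> str:
        now = self.clock()
        cached = self._store.get(path)
        if cached is not None and now - cached[0] < self.ttl:
            return cached[1]  # cache hit: skip the expensive render
        html = render(path)   # cache miss or expired: rebuild and store
        self._store[path] = (now, html)
        return html

# Usage with a fake clock and a render function that records each time it runs
renders = []
def render_page(path: str) -> str:
    renders.append(path)
    return f"<h1>{path}</h1>"

fake_now = [0.0]
cache = PageCache(ttl=60, clock=lambda: fake_now[0])
cache.get("/blog", render_page)  # first request renders the page
cache.get("/blog", render_page)  # served from the cache
fake_now[0] = 61.0
cache.get("/blog", render_page)  # expired, so it renders again
print(len(renders))
```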

Redirects

Each redirect adds an extra request before the browser can load the final page, so reduce redirects where you can.
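Redirect chains often build up over several site redesigns. This Python sketch follows a hypothetical chain to count the hops, then 'flattens' it so every old URL points straight at the final page, which is usually the quickest fix. The URLs and the ten-hop limit are made up for illustration.

```python
def resolve(redirects: dict[str, str], url: str, max_hops: int = 10) -> tuple[str, int]:
    """Follow a chain of redirects; return the final URL and the number of hops."""
    hops = 0
    seen = {url}
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {url}")
        seen.add(url)
    return url, hops

def flatten(redirects: dict[str, str]) -> dict[str, str]:
    """Point every old URL straight at its final destination (one hop each)."""
    return {old: resolve(redirects, old)[0] for old in redirects}

# Hypothetical chain built up over several redesigns
redirects = {
    "/old-bats": "/bats",
    "/bats": "/shop/bats",
    "/shop/bats": "/shop/baseball-bats",
}
print(resolve(redirects, "/old-bats"))  # three hops before the page loads
print(flatten(redirects))
```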

Render-Blocking JavaScript

Google also recommends avoiding or minimising render-blocking JavaScript: scripts that stop the browser from displaying the page until they have downloaded and run.
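A script in the page's <head> without an async or defer attribute makes the browser pause building the page until the script has downloaded and run. This Python sketch uses the standard-library HTML parser to list such scripts in a sample page. The file names are hypothetical, and it deliberately ignores other cases, such as inline scripts or module scripts (which are deferred by default).

```python
from html.parser import HTMLParser

class BlockingScriptFinder(HTMLParser):
    """List external scripts in <head> that have neither async nor defer."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head:
            attrs = dict(attrs)
            src = attrs.get("src")
            # Boolean attributes such as defer arrive as keys with a None value
            if src and "async" not in attrs and "defer" not in attrs:
                self.blocking.append(src)

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False

html = """<html><head>
<script src="/js/analytics.js"></script>
<script src="/js/menu.js" defer></script>
<script src="/js/chat-widget.js" async></script>
</head><body><p>Hello</p></body></html>"""

finder = BlockingScriptFinder()
finder.feed(html)
print(finder.blocking)  # candidates for adding defer or async
```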

Server Response

Your server's response time is affected by traffic, what needs to be loaded, your software and your hosting. Find the points where responses slow down and fix those to speed things up.

Content Distribution/Delivery Networks

When your content is stored across multiple servers in different locations (a content delivery network, or CDN) rather than in a single place, users get faster access to it from a server near them.

Finally, Google offers a free PageSpeed Insights report for any URL, with tips and suggestions for improvement.

EAT: Great Website Content

EAT stands for expertise, authoritativeness and trustworthiness. These aren't direct ranking factors, but they first appeared in Google's quality guidelines in 2014 as qualities good sites should aim for if they want Google's attention. In other words, it's what your site needs to be in order to be considered good by the world's biggest search engine. It's judged in context too. If you write children's books, you wouldn't be expected to write with the same authority as a medical professional writing about serious illness, because the subject matter is less serious. According to Moz, Google employs around 10,000 evaluators to assess the quality of websites ranking highly in the SERPs. Their reports go to Google's engineers and feed into algorithm updates that help deliver the best results for a search query. In 2018 Google updated the evaluators' guidelines to cover not just the content but the content creators too. That meant authors needed to be knowledgeable about the subject they were writing about. So things like the following can help:

- Author name

- Author bio
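One way to make an author's name and bio page machine-readable is schema.org structured data embedded in the page as JSON-LD. This Python sketch builds a minimal Article snippet with an author. The headline, name and URL are placeholders, and while markup like this helps search engines understand who wrote a page, it isn't a guaranteed ranking boost.

```python
import json

def article_json_ld(headline: str, author_name: str, author_url: str) -> str:
    """Build a schema.org Article snippet that names the author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,  # e.g. the author's bio page
        },
    }
    # JSON-LD is embedded in the page inside a script tag of this type
    return f'<script type="application/ld+json">\n{json.dumps(data, indent=2)}\n</script>'

snippet = article_json_ld(
    "Choosing a Baseball Bat", "Jane Example", "https://www.example.com/authors/jane-example"
)
print(snippet)
```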

Being an expert and having an author bio on a page isn't enough in itself, but when you're creating quality content, a bio helps Google see where else you write on the web and 'mentally' join it together. Content should be easy to read, with simple language and short sentences, and it should link to relevant sources. So, Dickens, you'll need to ditch those big, wordy pages of text in favour of something that's web-reader friendly. But always think context. Keep pages relevant and tightly focussed on the topic, because what you have to offer is why people visit your site. And it's not just the page copy; make sure your topic is in:

- Your title tag

- The URL

- Image alt text (you should add alt text to all your images; search engine spiders can't see images the way people do, so describe what each image shows)

Putting everything together, a properly optimised page should tick all of these boxes:

- Relevance to a specific topic

- The page topic in the title tag

- The page topic in the URL

- The page topic in image alt text

- The subject mentioned more than once throughout the text

- Unique content

- A link back to its category and subcategory pages

- A link back to the homepage
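The checklist lends itself to a quick automated audit. This Python sketch checks a single page for six on-page items drawn from the lists above: the topic in the title, URL and image alt text, every image having alt text, the topic mentioned more than once, and a link to the homepage. Uniqueness and category links need site-wide data, so they're left out. The page, URL and topic are invented, and the sketch assumes hyphenated URL slugs and a plain substring match for the topic.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

class PageParser(HTMLParser):
    """Collect the title, body text, image alt text and links from a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.text: list[str] = []
        self.alts: list[str] = []
        self.links: list[str] = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "img":
            self.alts.append(attrs.get("alt") or "")  # "" means no alt text
        elif tag == "a" and attrs.get("href"):
            self.links.append(attrs["href"])

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data
        else:
            self.text.append(data)

def audit(url: str, html: str, topic: str) -> dict[str, bool]:
    """Run six on-page checks for one topic; True means the check passed."""
    page = PageParser()
    page.feed(html)
    topic = topic.lower()
    slug = topic.replace(" ", "-")  # assumes hyphenated URL slugs
    body = " ".join(page.text).lower()
    site = urlparse(url).netloc
    return {
        "topic in title": topic in page.title.lower(),
        "topic in URL": slug in urlparse(url).path.lower(),
        "every image has alt text": all(alt.strip() for alt in page.alts),
        "topic in image alt text": any(topic in alt.lower() for alt in page.alts),
        "topic mentioned more than once": body.count(topic) > 1,
        "links to homepage": any(
            urlparse(href).path == "/" and urlparse(href).netloc in ("", site)
            for href in page.links
        ),
    }

html = """<html><head><title>Baseball Bats Buying Guide</title></head><body>
<a href="/">Home</a> &gt; <a href="/equipment">Equipment</a>
<h1>How to choose baseball bats</h1>
<img src="maple-bats.jpg" alt="Maple baseball bats on a rack">
<p>Baseball bats come in wood and metal. Pick baseball bats by length and weight.</p>
</body></html>"""

for check, passed in audit("https://www.example.com/equipment/baseball-bats", html, "baseball bats").items():
    print(f"{'PASS' if passed else 'FAIL'}  {check}")
```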

Create the Best Content You Can

While the algorithms have changed in the past and will change again (sometimes because people find ways of cheating the system, so Google needs to readjust), the fundamentals remain. Create good, relevant content that helps users, then optimise it. Make people want to share it. Get your site loading fast so users aren't kept waiting, and make it easy and logical to navigate. You'll be rewarded with good rankings and high-quality traffic that converts. And remember: build your site for people and Google will repay you.